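Preface

The text and images in this article come from the internet and are for study and discussion only, not for any commercial use. Copyright belongs to the original authors; if there is a problem, please contact us promptly so we can deal with it.

This is a simple Python example of scraping news articles: it goes from the list page to each detail page and saves every article as a txt file. The site's page structure is fairly regular and clean, which makes collecting and saving the news content straightforward.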

Libraries used

requests, time, re, UserAgent (from fake_useragent), etree (from lxml)

import requests,time,re

from fake_useragent import UserAgent

from lxml import etree
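As a rough illustration of what `UserAgent` is used for here, the sketch below builds request headers with a randomly chosen User-Agent string. The helper name `build_headers` and the list `FALLBACK_USER_AGENTS` are assumptions made for this example and are not part of the original code; the fallback only exists so the snippet still runs where `fake_useragent` is missing or cannot load its data.

```python
import random

# Hypothetical fallback values, used only when fake_useragent is unavailable.
FALLBACK_USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/84.0.4147.135 Safari/537.36",
    "Mozilla/5.0 (X11; Linux x86_64; rv:79.0) Gecko/20100101 Firefox/79.0",
]


def build_headers():
    """Return request headers with a randomly chosen User-Agent string."""
    try:
        from fake_useragent import UserAgent
        user_agent = UserAgent().random
    except Exception:
        # The import failed or the library could not load its browser data.
        user_agent = random.choice(FALLBACK_USER_AGENTS)
    return {"User-Agent": user_agent}


print(build_headers())
```

The returned dictionary can be passed as the `headers` argument of `requests.get`, which is how the full source at the end of the article uses its `random_headers` property.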

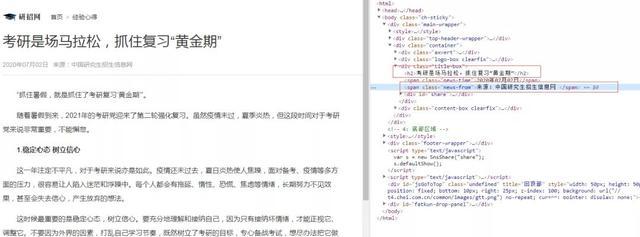

List page

XPath for the article links on the list page:

href_list=req.xpath('//ul[@class="news-list"]/li/a/@href')

Detail page

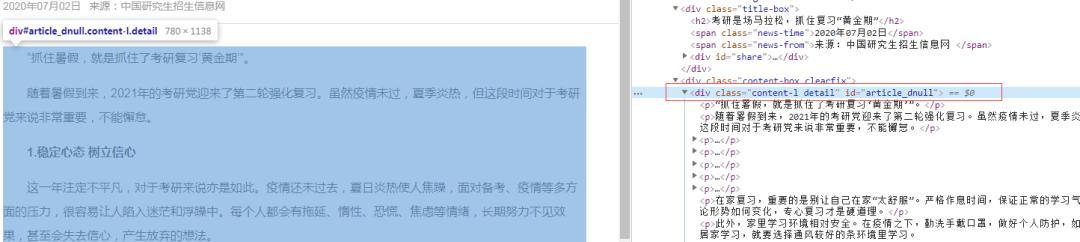

XPath for the content

h2=req.xpath('//div[@class="title-box"]/h2/text()')[0]

author=req.xpath('//div[@class="title-box"]/span[@class="news-from"]/text()')[0]

details=req.xpath('//div[@class="content-l detail"]/p/text()')

Formatting the content

detail='\n'.join(details)
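The list-page and detail-page XPath expressions above can be tried offline on a small piece of HTML. The sketch below is only an illustration: the markup in `LIST_HTML` and `DETAIL_HTML` is made up to mimic the structure those expressions expect (it is not copied from the real site), and the function names `parse_links` and `parse_article` are assumptions for this example.

```python
from lxml import etree

# Made-up markup that mimics the structure the XPath expressions expect.
LIST_HTML = """
<ul class="news-list">
  <li><a href="/kyzx/jyxd/1.shtml">First article</a></li>
  <li><a href="/kyzx/jyxd/2.shtml">Second article</a></li>
</ul>
"""

DETAIL_HTML = """
<div class="title-box">
  <h2>Sample headline</h2>
  <span class="news-from">Source: example</span>
</div>
<div class="content-l detail">
  <p>First paragraph.</p>
  <p>Second paragraph.</p>
</div>
"""


def parse_links(html):
    """Return the href of every article link in the list markup."""
    tree = etree.HTML(html)
    return tree.xpath('//ul[@class="news-list"]/li/a/@href')


def parse_article(html):
    """Return (title, source, body) extracted from the detail markup."""
    tree = etree.HTML(html)
    h2 = tree.xpath('//div[@class="title-box"]/h2/text()')[0]
    author = tree.xpath('//div[@class="title-box"]/span[@class="news-from"]/text()')[0]
    details = tree.xpath('//div[@class="content-l detail"]/p/text()')
    # Join the paragraph texts with newlines, as in the article.
    return h2, author, '\n'.join(details)


print(parse_links(LIST_HTML))
print(parse_article(DETAIL_HTML))
```

Note that `[@class="content-l detail"]` compares the whole attribute value, so it only matches when the class attribute is exactly that string. The `[0]` lookups raise `IndexError` when a page lacks the expected element, which is the error the full source catches.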

Cleaning the title: replace characters that are not allowed in file names

pattern = r"[\/\\\:\*\?\"\<\>\|]"

new_title = re.sub(pattern, "_", title) # replace with an underscore
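To see the effect of the substitution, here is a small self-contained sketch. The function name `sanitize_title` and the sample title are assumptions for illustration; the character class is a less heavily escaped spelling that matches the same nine characters as the pattern above.

```python
import re

# Characters that Windows does not allow in file names: / \ : * ? " < > |
ILLEGAL_CHARS = re.compile(r'[/\\:*?"<>|]')


def sanitize_title(title):
    """Replace every illegal file-name character with an underscore."""
    return ILLEGAL_CHARS.sub("_", title)


print(sanitize_title('Q&A: what is "AI"?'))
```

Inside a character class most punctuation needs no backslash, so only the backslash itself is escaped here.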

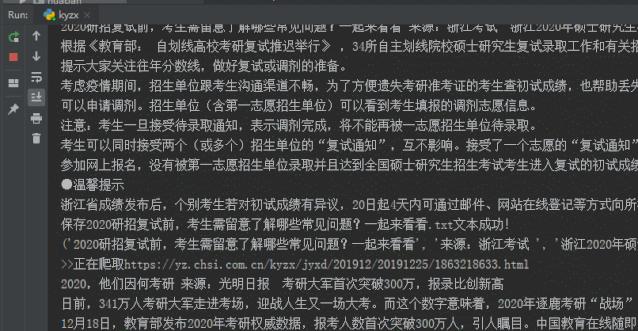

Saving the data as a txt file

def save(self,h2, author, detail):
    with open(f'{h2}.txt','w',encoding='utf-8') as f:
        f.write('%s%s%s%s%s'%(h2,'\n',detail,'\n',author))
    print(f"Saved {h2}.txt successfully!")
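The save step can be tried without touching the working directory. The sketch below is a standalone variant under stated assumptions: the function name `save_article` and its `directory` parameter are introduced here for illustration, and it writes the same three parts (title, body, source) in the same order as the method above.

```python
import tempfile
from pathlib import Path


def save_article(directory, h2, author, detail):
    """Write title, body and source to <directory>/<h2>.txt and return the path."""
    path = Path(directory) / f"{h2}.txt"
    with open(path, "w", encoding="utf-8") as f:
        f.write(f"{h2}\n{detail}\n{author}")
    return path


# Write into a temporary directory so the example leaves no files behind.
with tempfile.TemporaryDirectory() as tmp:
    saved = save_article(tmp, "Sample headline", "Source: example", "First paragraph.")
    print(saved.name)
    print(saved.read_text(encoding="utf-8"))
```

Because the title becomes the file name, it should already have had its illegal characters replaced before it reaches this function.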

Iterating over the scraped data with yield

def get_tasks(self):
    data_list = self.parse_home_list(self.url)
    for item in data_list:
        yield item

Program output

Scraping results
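The point of `yield` in `get_tasks` is that each article is fetched only when the caller asks for the next item. The sketch below shows that behaviour with a stand-in for the network call; `fake_parse_detail` and the `processed` list are assumptions made for this example, not part of the original code.

```python
processed = []  # records which links have actually been handled


def fake_parse_detail(href):
    """Stand-in for the real detail-page request."""
    processed.append(href)
    return f"item for {href}"


def parse_home_list(hrefs):
    for href in hrefs:
        yield fake_parse_detail(href)


def get_tasks(hrefs):
    # Same effect as looping and yielding each item; `yield from` is the shorter form.
    yield from parse_home_list(hrefs)


tasks = get_tasks(["/a", "/b"])
print(processed)    # still empty: creating the generator does no work
print(next(tasks))  # handles the first link only
print(processed)
```

Consuming the generator in a `for` loop, as the `__main__` block of the full source does, processes the pages one at a time in order.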

Full source for reference:

    # -*- coding: UTF-8 -*-
    import requests,time,re
    from fake_useragent import UserAgent
    from lxml import etree


    class RandomHeaders(object):
        ua=UserAgent()

        @property
        def random_headers(self):
            # a fresh random User-Agent on every access
            return {
                'User-Agent': self.ua.random,
            }


    class Spider(RandomHeaders):
        def __init__(self,url):
            self.url=url

        def parse_home_list(self,url):
            response=requests.get(url,headers=self.random_headers).content.decode('utf-8')
            req=etree.HTML(response)
            href_list=req.xpath('//ul[@class="news-list"]/li/a/@href')
            print(href_list)
            for href in href_list:
                item = self.parse_detail(f'https://yz.chsi.com.cn{href}')
                yield item

        def parse_detail(self,url):
            print(f">>Scraping {url}")
            try:
                response = requests.get(url, headers=self.random_headers).content.decode('utf-8')
                time.sleep(2)
            except Exception as e:
                print(e.args)
                # return the retry's result so the caller does not receive None
                return self.parse_detail(url)
            else:
                req = etree.HTML(response)
                try:
                    h2=req.xpath('//div[@class="title-box"]/h2/text()')[0]
                    h2=self.validate_title(h2)
                    author=req.xpath('//div[@class="title-box"]/span[@class="news-from"]/text()')[0]
                    details=req.xpath('//div[@class="content-l detail"]/p/text()')
                    detail='\n'.join(details)
                    print(h2, author, detail)
                    self.save(h2, author, detail)
                    return h2, author, detail
                except IndexError:
                    print(">>>Scraping failed, retrying in 5s..")
                    time.sleep(5)
                    # note: retries without limit if the page never parses
                    return self.parse_detail(url)

        @staticmethod
        def validate_title(title):
            pattern = r"[\/\\\:\*\?\"\<\>\|]"
            new_title = re.sub(pattern, "_", title)  # replace with an underscore
            return new_title

        def save(self,h2, author, detail):
            with open(f'{h2}.txt','w',encoding='utf-8') as f:
                f.write('%s%s%s%s%s'%(h2,'\n',detail,'\n',author))
            print(f"Saved {h2}.txt successfully!")

        def get_tasks(self):
            data_list = self.parse_home_list(self.url)
            for item in data_list:
                yield item


    if __name__=="__main__":
        url="https://yz.chsi.com.cn/kyzx/jyxd/"
        spider=Spider(url)
        for data in spider.get_tasks():
            print(data)

That is all for this article. We hope it helps with your studies, and we hope you will keep supporting python博客.


A Detailed Python Crawler Example: Scraping News Articles

Views: 1797  Date: 2020-08-26  Category: Python crawlers
