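Preface: I recently needed the PyTorch framework for my studies, so I learned the basics and wrote a simple example to record the fundamentals of building a recognition network in PyTorch. It ports another blogger's TensorFlow MNIST recognition example to PyTorch, which also makes the general differences between the two frameworks easy to see.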

PyTorch in practice: MNIST handwritten digit recognition

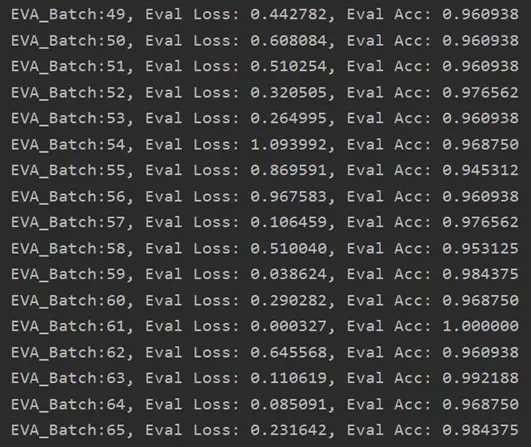

```python
# Required packages
import torch
import torch.nn as nn  # building blocks for network layers
import torch.optim as optim  # optimizers
from torch.utils.data import DataLoader  # iterates over a dataset in batches
from torchvision import datasets, transforms  # used to load the MNIST dataset

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Download the dataset; ToTensor scales pixels to [0, 1], Normalize standardizes them
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,)),  # mean and std of the MNIST training set
])
train_set = datasets.MNIST('./data', train=True, download=True, transform=transform)
test_set = datasets.MNIST('./data', train=False, download=True, transform=transform)


# Build the network (same structure as in the TensorFlow article)
class Net(nn.Module):
    def __init__(self, num_classes=10):
        super(Net, self).__init__()
        self.features = nn.Sequential(
            nn.Conv2d(1, 32, kernel_size=5, stride=1, padding=2),   # 1x28x28 -> 32x28x28
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),                  # -> 32x14x14
            nn.Conv2d(32, 64, kernel_size=5, stride=1, padding=2),  # -> 64x14x14
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),                  # -> 64x7x7
        )
        self.classifier = nn.Sequential(
            nn.Linear(7 * 7 * 64, 7 * 7 * 64),  # 3136 -> 3136
            nn.ReLU(inplace=True),
            nn.Linear(7 * 7 * 64, num_classes),
        )

    def forward(self, x):
        x = self.features(x)
        x = torch.flatten(x, 1)  # flatten everything except the batch dimension
        x = self.classifier(x)
        return x


net = Net().to(device)

# Cross-entropy loss, minimized with the Adam optimizer
criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(net.parameters(), lr=1e-2)

train_data = DataLoader(train_set, batch_size=128, shuffle=True)
test_data = DataLoader(test_set, batch_size=128, shuffle=False)

# Training
num_epochs = 1
for epoch in range(num_epochs):
    net.train()  # call train() before training and eval() before testing
    for batch, (batch_images, batch_labels) in enumerate(train_data, 1):
        # Since PyTorch 0.4, Variable has been merged into Tensor, so no Variable wrapping is needed
        batch_images, batch_labels = batch_images.to(device), batch_labels.to(device)
        # Forward pass
        out = net(batch_images)
        loss = criterion(out, batch_labels)
        prediction = torch.max(out, 1)[1]  # index of the highest score = predicted digit
        # train_correct is an integer (Long) tensor, so convert it to float before dividing
        train_correct = (prediction == batch_labels).sum()
        train_acc = train_correct.float() / batch_labels.size(0)  # the last batch holds fewer than 128 images

        optimizer.zero_grad()  # clear old gradients; otherwise every backward() call accumulates them
        loss.backward()        # backpropagate the loss
        optimizer.step()       # update the parameters

        print("Epoch: %d/%d || batch: %d/%d || loss: %.3f || train_acc: %.2f"
              % (epoch + 1, num_epochs, batch, len(train_data), loss.item(), train_acc.item()))

# Evaluate on the test set
net.eval()  # switch the model to inference mode
with torch.no_grad():  # no gradients are needed for evaluation
    for idx, (im, label) in enumerate(test_data):
        im, label = im.to(device), label.to(device)
        out = net(im)
        eval_loss = criterion(out, label)
        pred = torch.max(out, 1)[1]
        num_correct = (pred == label).sum()
        eval_acc = num_correct.float() / label.size(0)
        print('EVA_Batch: {}, Eval Loss: {:.6f}, Eval Acc: {:.6f}'
              .format(idx, eval_loss.item(), eval_acc.item()))
```
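ToTensor rescales the 8-bit pixel values to [0, 1], and Normalize then computes (x - mean) / std for each channel; 0.1307 and 0.3081 are the mean and standard deviation commonly used for the MNIST training set. A minimal plain-Python sketch of those two steps for a single pixel (the names `to_unit_range` and `normalize` are hypothetical helpers, not torchvision functions):

```python
MNIST_MEAN, MNIST_STD = 0.1307, 0.3081


def to_unit_range(pixel):
    """What ToTensor does to one uint8 pixel: scale [0, 255] to [0.0, 1.0]."""
    return pixel / 255.0


def normalize(value, mean=MNIST_MEAN, std=MNIST_STD):
    """What Normalize does to one value of a single-channel image."""
    return (value - mean) / std


for pixel in (0, 128, 255):
    print(pixel, round(normalize(to_unit_range(pixel)), 4))
```

A black background pixel maps to about -0.42 and a fully white stroke to about 2.82, so that over the whole training set the inputs have roughly zero mean and unit standard deviation.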
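The first Linear layer expects 3136 inputs because each 5×5 convolution with padding 2 keeps the spatial size and each 2×2 max pool with stride 2 halves it: 28 → 14 → 7, leaving 7 × 7 × 64 = 3136 values per image. A minimal sketch of that arithmetic, using the standard output-size formula for dilation 1 and no ceil mode; `conv_out` and `flattened_features` are hypothetical names, not part of PyTorch:

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output height/width of Conv2d or MaxPool2d (dilation=1, ceil_mode=False)."""
    return (size + 2 * padding - kernel) // stride + 1


def flattened_features(size=28, out_channels=64):
    size = conv_out(size, kernel=5, stride=1, padding=2)  # conv1: 28 -> 28
    size = conv_out(size, kernel=2, stride=2)             # pool1: 28 -> 14
    size = conv_out(size, kernel=5, stride=1, padding=2)  # conv2: 14 -> 14
    size = conv_out(size, kernel=2, stride=2)             # pool2: 14 -> 7
    return size * size * out_channels


print(flattened_features())  # 3136
```

If the input size or padding changes, the in_features of the first Linear layer has to change with it; in PyTorch you can also read it off by passing a dummy tensor such as `torch.zeros(1, 1, 28, 28)` through `net.features`.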
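Per-batch accuracy should be divided by the number of labels actually in the batch rather than a fixed 128. MNIST has 60,000 training and 10,000 test images, so with `batch_size=128` and the default `drop_last=False` the final batches hold only 96 and 16 images, and `len(DataLoader)` is the ceiling of samples / batch size. A plain-Python sketch of that bookkeeping and of the argmax accuracy; `batch_sizes` and `batch_accuracy` are hypothetical helpers, and the logits are made-up numbers:

```python
def batch_sizes(num_samples, batch_size):
    """Batch sizes a DataLoader yields when drop_last=False: full batches plus a remainder."""
    full, rest = divmod(num_samples, batch_size)
    return [batch_size] * full + ([rest] if rest else [])


def batch_accuracy(logits, labels):
    """Share of rows whose highest score sits at the label index (like torch.max(out, 1)[1])."""
    preds = [max(range(len(row)), key=row.__getitem__) for row in logits]
    correct = sum(p == y for p, y in zip(preds, labels))
    return correct / len(labels)  # divide by the real batch size, not a fixed 128


train_batches = batch_sizes(60000, 128)
test_batches = batch_sizes(10000, 128)
print(len(train_batches), train_batches[-1])  # 469 96
print(len(test_batches), test_batches[-1])    # 79 16

# Two samples, three classes: the first prediction is right, the second is wrong
logits = [[0.1, 2.0, 0.3], [1.5, 0.2, 0.1]]
labels = [1, 2]
print(batch_accuracy(logits, labels))  # 0.5
```

Dividing the single correct prediction by 128 instead of 2 would report about 0.008, which is why the fixed divisor understates accuracy on the last, smaller batch.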


MNIST handwritten digit recognition with the PyTorch framework (compared with TensorFlow)

Published: 2020-08-26 · Category: Python tutorials
